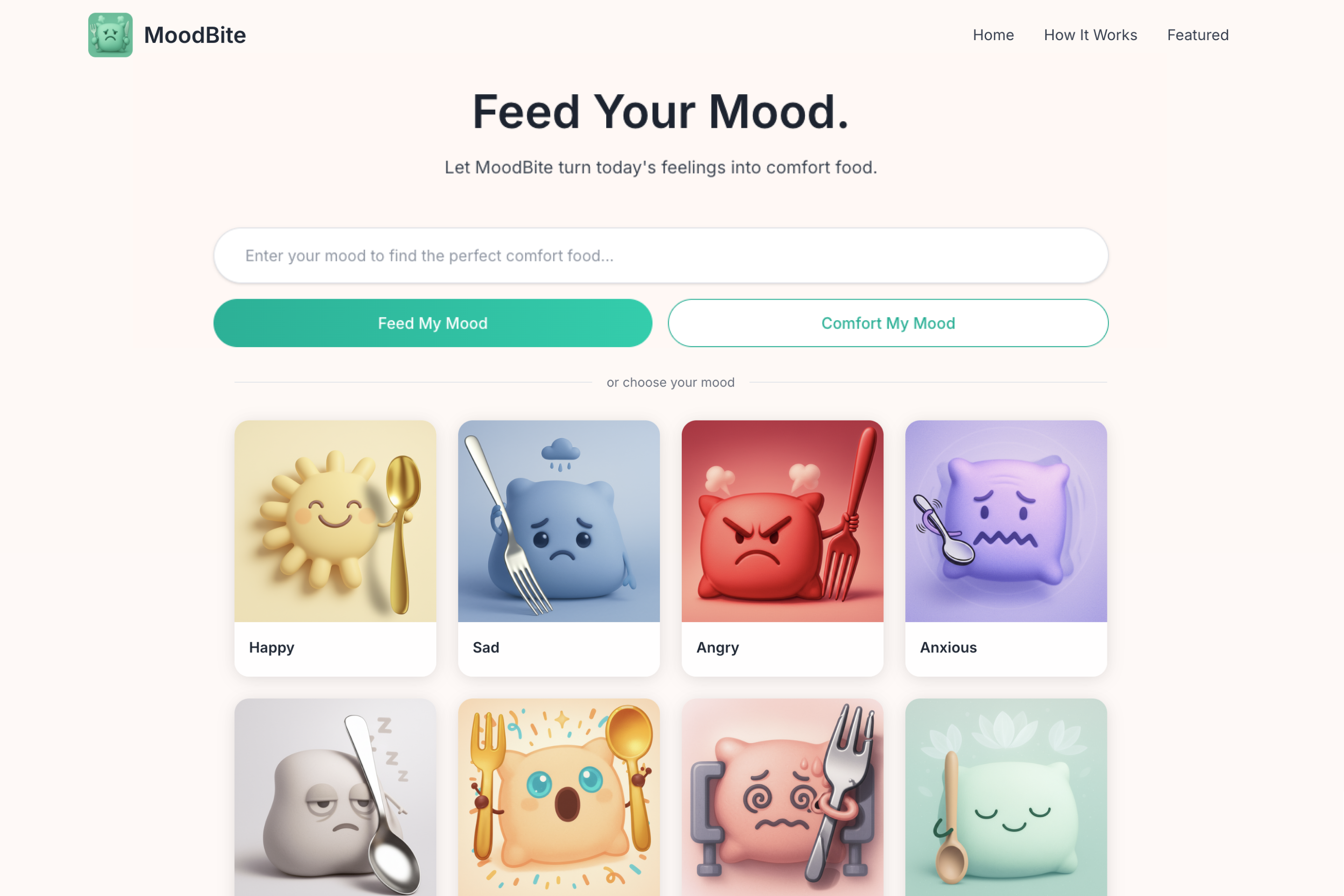

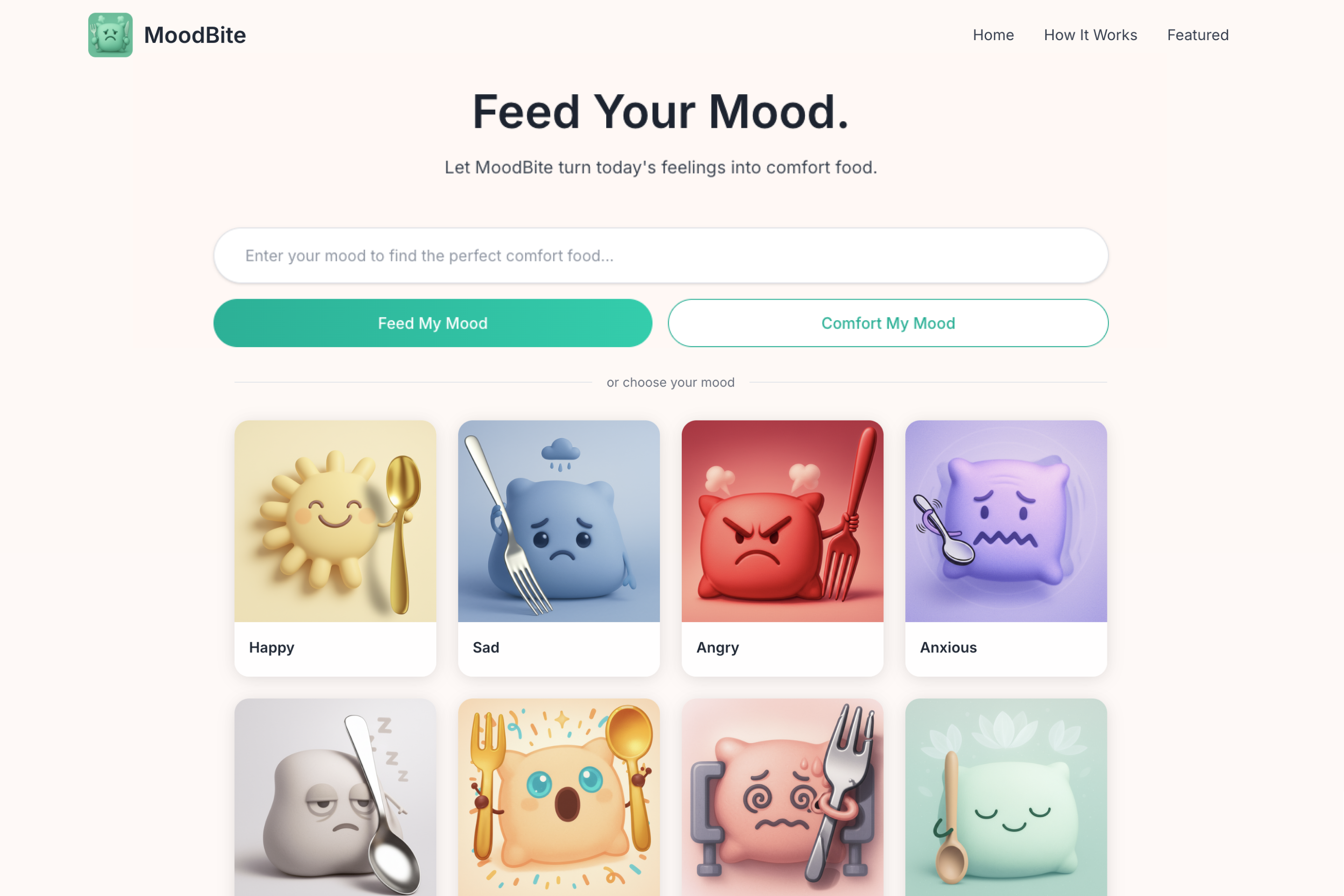

MoodBite

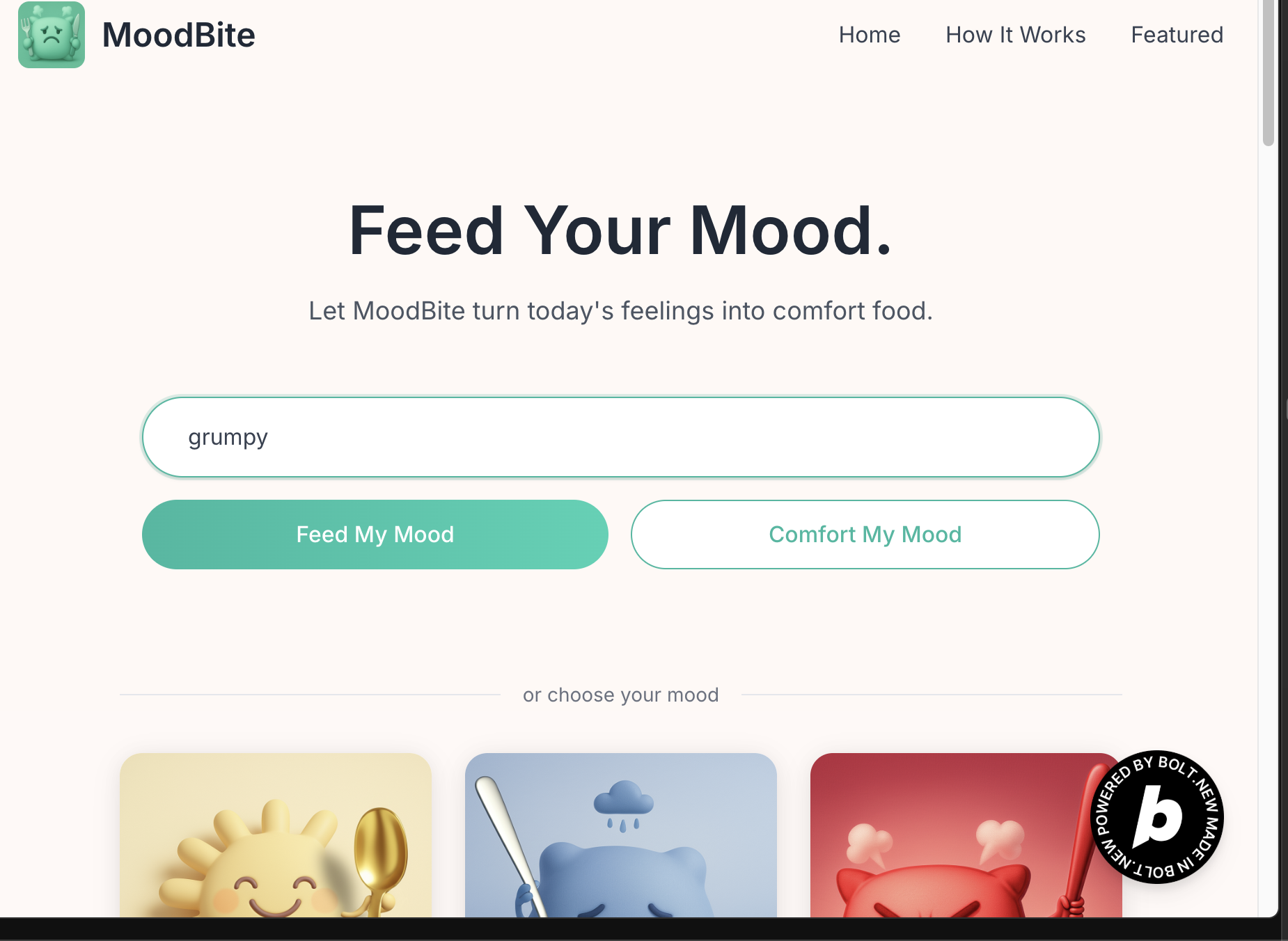

MoodBite is a concept prototype built during the June 2025 Bolt Hackathon. It’s an AI-powered food suggestion app that turns emotions into comforting food ideas. I led the design, AI flow, and MVP build within the hackathon timeframe.

The system uses a Hugging Face emotion classification model to analyze the input, then sends a prompt to GPT-3.5 to recommend a food and generate a kind message. I also added a fallback: if the emotion classifier’s confidence score is too low, the app shows a default suggestion. Thumbs up/down feedback is collected to help refine the system over time.
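The classify-then-generate flow with a confidence fallback can be sketched roughly as follows. This is a minimal illustration, not the hackathon code: `classify` and `generate` are hypothetical stand-ins for the Hugging Face classifier and the GPT-3.5 call, and the 0.5 threshold and the default suggestion text are assumed values.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

# Assumed value for illustration; the real threshold is not stated in the write-up.
CONFIDENCE_THRESHOLD = 0.5


@dataclass
class Suggestion:
    emotion: str
    food: str
    message: str
    is_fallback: bool = False


# Hypothetical default shown when the classifier is unsure.
DEFAULT_SUGGESTION = Suggestion(
    emotion="unknown",
    food="a warm bowl of soup",
    message="Whatever you're feeling, you deserve something cozy.",
    is_fallback=True,
)


def suggest_food(
    text: str,
    classify: Callable[[str], Tuple[str, float]],
    generate: Callable[[str], Tuple[str, str]],
    threshold: float = CONFIDENCE_THRESHOLD,
) -> Suggestion:
    """Classify the mood, then generate a suggestion or fall back to the default."""
    emotion, score = classify(text)
    if score < threshold:
        # Classifier is unsure: skip the language model and show the default.
        return DEFAULT_SUGGESTION
    food, message = generate(emotion)
    return Suggestion(emotion=emotion, food=food, message=message)


# Stub classifier and generator so the sketch runs without any API keys.
def fake_classify(text: str) -> Tuple[str, float]:
    return ("sadness", 0.91) if "down" in text else ("neutral", 0.2)


def fake_generate(emotion: str) -> Tuple[str, str]:
    return ("mac and cheese", f"It's okay to feel {emotion}. Be gentle with yourself.")


print(suggest_food("feeling a bit down today", fake_classify, fake_generate))
print(suggest_food("hmm", fake_classify, fake_generate))
```

Passing the classifier and generator in as functions keeps the fallback decision in one place, so either model could be swapped without touching the threshold logic.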

The idea started when I caught myself scrolling through food delivery apps just to feel better. It made me realize how often we eat emotionally, sometimes even before we can name what we feel. Through interviews and reflection, I found that many people crave emotional validation before making food choices.

During a low moment, I caught myself scrolling food apps for comfort. That moment revealed something deeper: we often eat our feelings before we even name them.

Quick user interviews and observations showed that people often seek comfort food when feeling off, but most apps ignore emotional context. I saw a chance to connect mood and food in a more human way using AI.

MoodBite turns your emotion into a food suggestion. Users either tap on a feeling or describe it. The AI detects the mood, suggests a comforting dish, and shows a matching image — either generated or pulled from a preset. Users can save moods and build a food-emotion diary.

With no AI background, I used Bolt’s vibe coding to describe the emotion-to-food flow in plain language. Hugging Face handled mood detection from text, and Stable Diffusion generated food visuals.

I applied AI only where it enhanced emotional value, then added fallback logic and a comfort mode to keep the experience reliable. The hardest part? Knowing when not to use AI.

During the hackathon, I faced three key limitations:

To keep the demo functional and responsive, I introduced two fallback strategies:

✅ Preset image pool: Used Pexels stock images mapped to common emotions

✅ Prompt refinement: Adjusted and simplified prompts using specific emotion-food keywords to reduce model confusion
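One way the preset image pool could work is sketched below. The file names, the emotion labels, and the idea of trying generation first and catching any failure are all assumptions for illustration; the write-up does not list the actual Pexels images or the exact trigger for the fallback.

```python
from typing import Callable, Optional

# Hypothetical file names; the actual stock images are not listed in the write-up.
PRESET_IMAGES = {
    "sadness": "presets/soup.jpg",
    "joy": "presets/ice-cream.jpg",
    "anger": "presets/spicy-noodles.jpg",
    "fear": "presets/hot-chocolate.jpg",
}
DEFAULT_IMAGE = "presets/comfort-default.jpg"


def pick_image(
    emotion: str,
    generate_image: Optional[Callable[[str], str]] = None,
) -> str:
    """Try the image generator first; on any failure use the preset pool."""
    if generate_image is not None:
        try:
            return generate_image(emotion)
        except Exception:
            pass  # Generation failed or timed out: fall through to presets.
    # Unknown emotions get a generic comfort image instead of an error.
    return PRESET_IMAGES.get(emotion.lower(), DEFAULT_IMAGE)
```

Calling `pick_image("sadness")` with no generator goes straight to the presets, which is one way to keep a demo responsive when image generation is slow.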

To deliver a smooth and emotionally resonant experience, I made a few critical decisions:

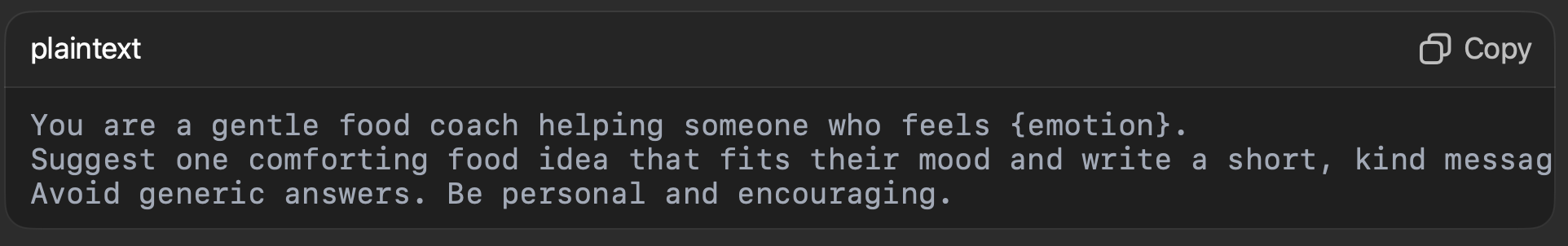

I created emotion-specific prompts to guide GPT-3.5 in generating food suggestions and kind messages. Each prompt was tuned to reflect the user’s emotional state, balancing clarity, empathy, and specificity. To improve reliability, I added emotion keywords and fallback logic for cases where the model output was too vague or off-topic.

You are a gentle food coach helping someone who feels {emotion}.

Suggest one comforting food idea that fits their mood and write a short, kind message in a warm tone.

Avoid generic answers. Be personal and encouraging.
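Filling the template above and appending emotion keywords might look like the following sketch. The keyword lists, the `build_prompt` and `is_too_vague` helpers, and the word-count check are hypothetical; the write-up does not say how vague output was detected.

```python
PROMPT_TEMPLATE = (
    "You are a gentle food coach helping someone who feels {emotion}.\n"
    "Suggest one comforting food idea that fits their mood and write a short, "
    "kind message in a warm tone.\n"
    "Avoid generic answers. Be personal and encouraging."
)

# Hypothetical emotion-to-keyword hints used to steer the model.
EMOTION_KEYWORDS = {
    "sadness": ["warm", "soft", "soup"],
    "stress": ["crunchy", "simple", "tea"],
}


def build_prompt(emotion: str) -> str:
    """Fill the template and append keyword hints when the emotion has any."""
    prompt = PROMPT_TEMPLATE.format(emotion=emotion)
    keywords = EMOTION_KEYWORDS.get(emotion.lower())
    if keywords:
        prompt += "\nKeywords to consider: " + ", ".join(keywords) + "."
    return prompt


def is_too_vague(reply: str, min_words: int = 8) -> bool:
    """Crude stand-in check: treat empty or very short replies as unusable."""
    return len(reply.split()) < min_words


print(build_prompt("sadness"))
```

A reply flagged by `is_too_vague` would then be replaced with a preset suggestion rather than shown to the user.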

I used warm tones, rounded edges, and a floating pillow icon to signal softness and emotional safety. Every UI element, from the input field to the image reveal, was designed to feel calm, non-judgmental, and quietly comforting.

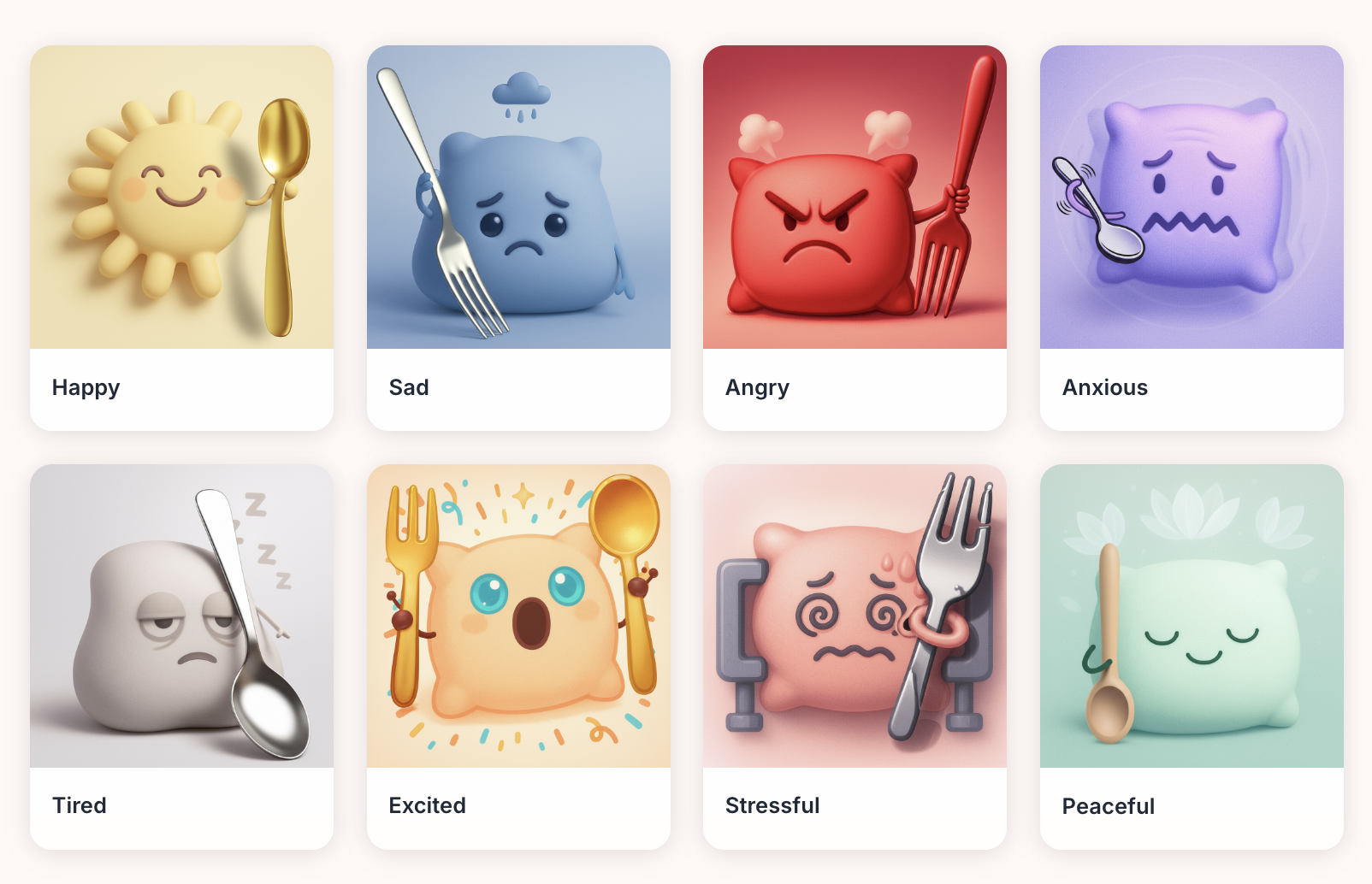

The Pillar Buddy — Mascot Concept

The pillow icon became a visual anchor for rest and emotional ease: a soft, rounded pillow holding a fork and knife. The mascot also served as a brand anchor during onboarding and mood check-in.

After testing, I renamed the button to “Comfort My Mood” for clearer intent. I also used OpenAI to improve emotion detection, adding better handling of misspellings and fallback support, so that even mistyped or vague inputs could still generate relevant suggestions.

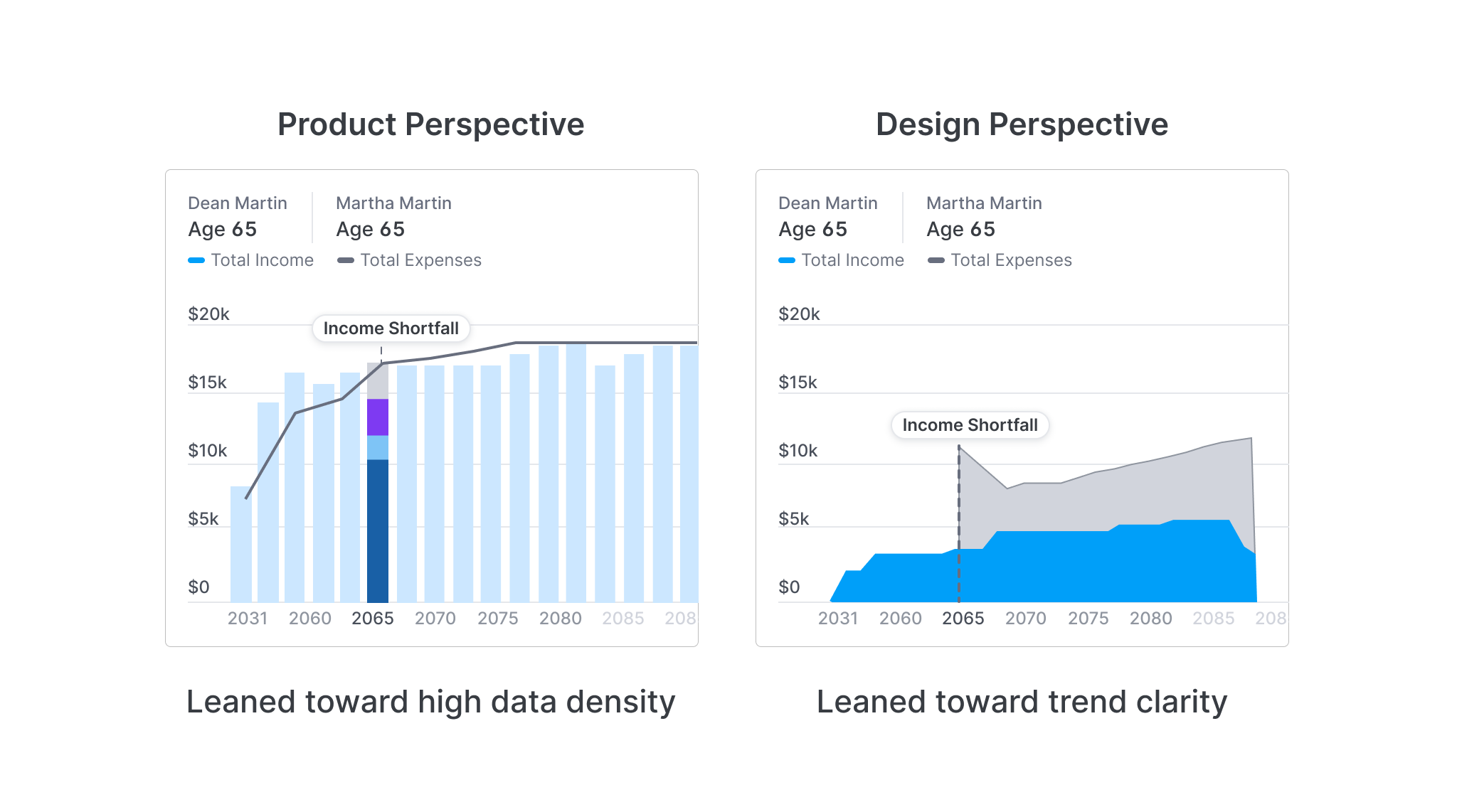


This was my first AI project, and I realized it’s not just about models; it’s about decisions. Knowing when AI adds value, how to work within its limits, and how to protect the emotional experience was the real design challenge. MoodBite reminded me that small ideas can carry big feelings.